Inputs: what you invest

Inputs are the resources that go into the program: staff time, funding, materials, technology, partnerships, facilities. The test for an input is the question "would the program stop if this disappeared?" If yes, it belongs here. Inputs rarely get measured in M&E because they're tracked in budgets and HR systems, but they belong in the ToC so the resource bet is visible.

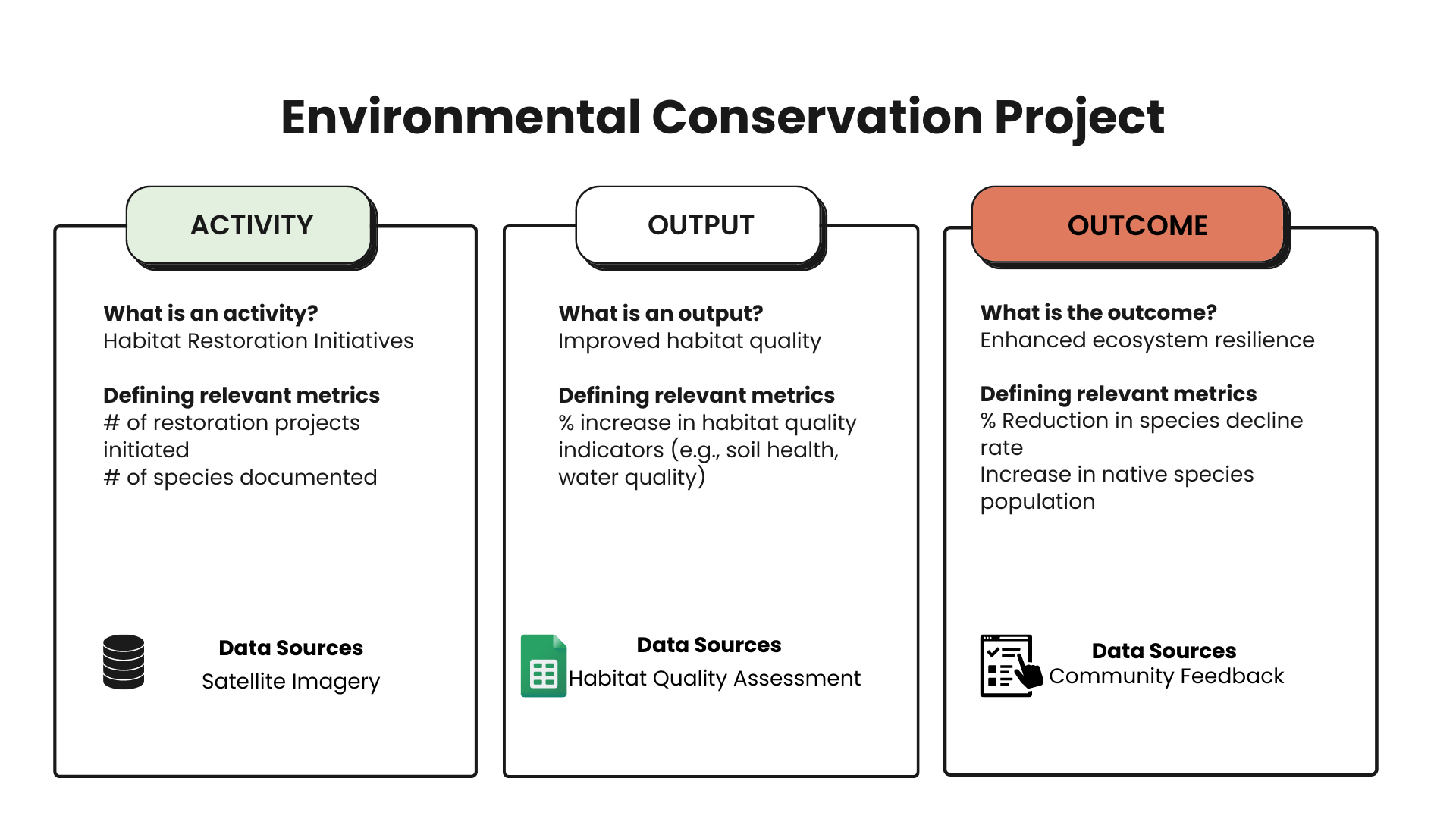

Activities: what the program does

Activities are verb-led. Training, coaching, screening, distributing, convening, advocating. The test is whether you could point at staff doing the activity in a given week. Activities are where program teams spend most of their thinking time. They are also the point at which outputs get counted, which is why so many M&E systems collapse activities and outputs into one column. Resist that: an activity is what staff do, and an output is what the activity produces.

Outputs: countable products

Outputs answer "how many." 120 trainees, 600 screenings, 40 grants awarded. They are the direct, countable products of activities. Outputs are the easiest component to measure and the most overweighted in funder reports, because they show effort. A program with strong outputs and weak outcomes is doing things without changing anything. The ToC is what reveals that mismatch.

Short-term outcomes: changes in 3 to 6 months

Short-term outcomes are changes in participants' knowledge, attitudes, or skills, usually visible within 3 to 6 months of the activity. "Participants demonstrate budget-planning skill" is a short-term outcome. "Participants are saving money" is not: it is a change in behavior, which makes it a long-term outcome. The distinction matters because short-term outcomes are typically captured through pre/post surveys and skill assessments, while later outcomes need follow-up.
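As a rough illustration of the pre/post approach, the sketch below computes the average change in a skill-assessment score. The participant IDs, the scores, and the function name `pre_post_gain` are hypothetical, and it assumes only participants who completed both assessments should count toward the result.

```python
from statistics import mean


def pre_post_gain(pre, post):
    """Average score change for participants assessed both before and after.

    `pre` and `post` map a participant ID to an assessment score.
    Participants missing from either assessment are left out, because
    a change cannot be computed from a single score.
    """
    paired = pre.keys() & post.keys()
    if not paired:
        raise ValueError("no participant completed both assessments")
    return mean(post[pid] - pre[pid] for pid in paired)


# Hypothetical budget-planning assessment scores (0 to 100).
pre_scores = {"P1": 40, "P2": 55, "P3": 60}
post_scores = {"P1": 70, "P2": 65}  # P3 did not take the post-assessment

gain = pre_post_gain(pre_scores, post_scores)
print(f"Average gain among paired participants: {gain}")
```

Pairing on participant ID, rather than comparing the two group averages, keeps dropouts from distorting the result.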

Long-term outcomes: changes in 6 to 18 months

Long-term outcomes are changes in behavior, practice, or status. Sustained employment, reduced ER visits, on-time grade promotion. They take longer to manifest and require longitudinal data. This is where unique stakeholder IDs and a longitudinal survey design start to matter, because asking the same person again at month 12 is the only way to verify the outcome held.
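A minimal sketch of why unique IDs matter, assuming a two-wave design with surveys at month 6 and month 12. The `SurveyResponse` record, its field names, and the sample IDs are hypothetical, not taken from any particular M&E tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SurveyResponse:
    stakeholder_id: str  # unique ID that stays the same across survey waves
    month: int           # months since program start
    employed: bool       # the outcome being tracked


def match_waves(responses, first_month, followup_month):
    """Link each person's two survey waves by stakeholder ID.

    Returns (sustained, lost): IDs employed at both waves, and IDs
    seen at the first wave but missing at follow-up.
    """
    first = {r.stakeholder_id: r for r in responses if r.month == first_month}
    later = {r.stakeholder_id: r for r in responses if r.month == followup_month}
    matched = sorted(first.keys() & later.keys())
    sustained = [
        sid for sid in matched
        if first[sid].employed and later[sid].employed
    ]
    lost = sorted(first.keys() - later.keys())
    return sustained, lost


responses = [
    SurveyResponse("S-001", 6, True),
    SurveyResponse("S-001", 12, True),
    SurveyResponse("S-002", 6, True),
    SurveyResponse("S-002", 12, False),
    SurveyResponse("S-003", 6, True),  # no month-12 response
]

sustained, lost = match_waves(responses, first_month=6, followup_month=12)
print("Outcome held at month 12:", sustained)
print("Lost to follow-up:", lost)
```

Without a stable ID, the month-12 responses could only be reported as a fresh headcount, and there would be no way to tell sustained employment apart from a different set of people being employed.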

Impact: population-level change

Impact is the broadest claim: reduced unemployment in a region, narrower achievement gap in a district, lower disease burden in a community. Most programs contribute to impact rather than cause it single-handedly. The honest way to write impact in a ToC uses contribution language, not causation language. Funders increasingly prefer this framing because it survives scrutiny.
